Article: Friday, 12 April 2019

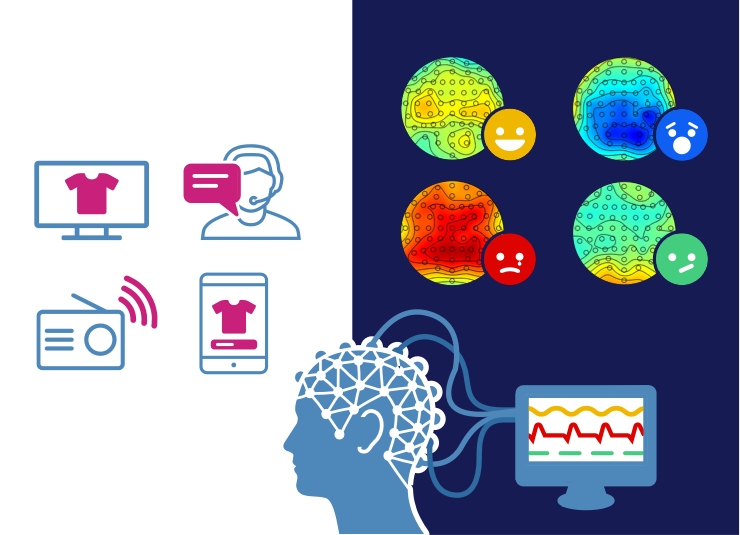

Emotions play a key role in our behaviour. Studies have shown that most of the decisions we make are driven by emotions, not merely by reason. But measuring emotions has proven difficult. PhD candidate Esther Eijlers, Dr Maarten Boksem and Prof. Ale Smidts of Rotterdam School of Management, Erasmus University (RSM) have developed a new method to measure and track emotions in the brain in real time with electroencephalography (EEG). For the first time, they can see, moment to moment, which emotions people experience when they watch something. They have described their findings in the research paper "Implicit measurement of emotional experience and its dynamics".

Measuring emotional experiences has been challenging. When participants are asked to self-report their emotions during an event, they might give socially desirable but untrue answers, because they may not want to express exactly how they feel. Even when that is not the case, many people are known to be poor at reflecting on their internal mental processes. They may simply be unable to put their feelings into words accurately, and asking them to reflect may change the experience itself. In addition, people's judgement and behaviour may be influenced by subconscious emotions, because affective processes mostly occur outside of people's awareness.

Neuroimaging methods may provide a solution to this problem by recording the brain activity that underlies both conscious and unconscious processes. Functional magnetic resonance imaging (fMRI) has been used successfully to localise the neural networks involved in many psychological processes. But fMRI is less precise about how neural processes develop over time, which is a drawback because emotions are short-lived experiences.

Instead of using fMRI, Esther Eijlers and her colleagues reasoned that electroencephalography (EEG) would be better suited to measuring emotions in the brain over time. They collected EEG data from 40 students while they watched short videos, each designed to trigger a specific emotion: happiness, sadness, fear or disgust. Participants viewed five short clips for each of the four emotions.

Using machine learning techniques, the researchers then classified the emotional content of these clips based on the frequency content and scalp topography of the EEG signal.

Esther Eijlers: “Additionally, we were able to see in the EEG data how the emotional responses actually differed from each other in terms of patterns of frequency distributions and topography of the EEG signal. This allowed us to speculate about underlying (neural) processes that we measured, associated with the different emotions.”

The ultimate validation that the classification algorithm worked came when the researchers showed the participants a clip from the animated Pixar movie 'Up'. This clip summarises the lives of a man and a woman who get married and grow old together.

Esther Eijlers: “This clip was especially included because of its complete story arc, in order to track dynamic changes in the emotional response triggered by viewing the clip. We estimated the average happy and sad response across participants, second-by-second during the movie clip. It appeared that the emotional response, which was estimated based on the EEG data, was indeed able to accurately track the main ups and downs of the narrative. This illustrates that our classification approach could be generalised to other videos that are not limited to triggering mainly one emotion to an extreme extent.”

Being able to measure emotional experiences accurately can serve many practical purposes. It can, for example, show film makers, TV producers, advertisers, video game designers and web store designers whether their product has the intended emotional effect on people. It also makes it possible to track customer experience over time. When this method becomes commercially available, it will be a valuable tool for more effective storytelling and, in turn, a better customer experience.

The PhD dissertation of Esther Eijlers, "Emotional Experience and Advertising Effectiveness: on the use of EEG in marketing", was published on 30 January 2020 as part of the ERIM Ph.D. Series Research in Management.


Rotterdam School of Management, Erasmus University (RSM) is one of Europe's top-ranked business schools. RSM provides ground-breaking research and education furthering excellence in all aspects of management and is based in the international port city of Rotterdam, a vital nexus of business, logistics and trade. RSM's primary focus is on developing business leaders with international careers who can become a force for positive change by carrying their innovative mindset into a sustainable future. Our first-class range of bachelor, master, MBA, PhD and executive programmes encourages them to become critical, creative, caring and collaborative thinkers and doers.