Article: Tuesday, 12 July 2016

Global warming, sustainable energy production, financial market volatility – we don’t have the answers to most of these problems yet, but most experts in these fields agree that they will only be resolved through the positive interaction of hundreds of social, economic, political, and technical factors.

Humanity may have created these “wicked problems,” as these kinds of challenges are called, but we seldom have a good idea about how to solve them. Where should you start? What should you do? There are too many connections and too many interdependencies for anyone to grasp the entire ecosystem at once. Traditionally, policymakers only learned after the fact whether they had done the right thing – and even in hindsight, the causation was often debatable.

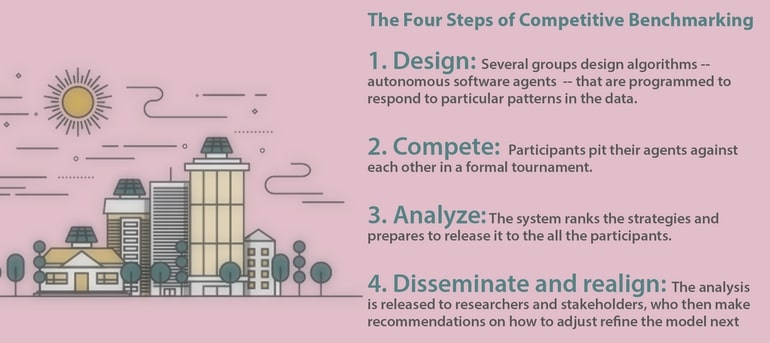

We have developed a tool that we believe makes responding to wicked problems somewhat easier. Competitive Benchmarking (CB) is a software modelling tool that allows researchers to build models that incorporate data from a variety of sources, such as usage data from customers, production patterns from producers, and regulatory constraints. Using past and present data gives us the opportunity to test alternative futures and weigh their counterfactual risks.

In our recent paper, Competitive Benchmarking: An IS Research Approach to Address Wicked Problems with Big Data and Analytics, my co-authors Alok Gupta and John Collins of the University of Minnesota and I reviewed the two decades of work that led to CB.

John and I got into the global problem-solving business by accident. We began entering artificial intelligence competitions in the 1990s, but in the 2000s realised that our methods could be used to study real-world problems, such as modelling supply chain risk.

Using this set of tools to look at supply chains was very successful, and in 2009, during a workshop on sustainable energy in Germany, we were encouraged by the German government and other energy stakeholders to apply the CB technique to understanding smart grids.

Eventually, this project grew into the Power Trading Agent Competition (Power TAC), a competitive simulation of retail electric power markets that helps us evaluate market-based approaches to energy sustainability. By using real data to model an electric distribution system, the economic system, and retail electricity tariffs on this common platform, Power TAC participants are able to learn more about how those moving parts interact, and test ways in which multiple markets might be tweaked toward greater levels of sustainability and stability.

Some 17 research groups around the world have participated in one or more competitions, and many more people use the platform for their own research outside the context of the annual tournament, including scholars, utilities executives, and energy customer lobby groups.

What’s unique about the Power TAC system is not that we’ve built a market model – people build all kinds of models these days – but that CB allows us to compare market designs and study the decision problems that might arise as the proportion of renewable energy resources, electrified transport and climate-control options grows. CB enables us to pit business strategies against each other in order to understand what business opportunities might open up as the energy market evolves from centralised monopolies that send energy out to the system’s edge to a decentralised system of prosumers who both use and produce power.

It has also helped us understand which regulatory structures would work best, because the dynamics of this new market are largely uncharted territory: in Germany, for instance, where there were once four energy companies, there are now over a thousand.

Every year, we have kept working to make the process more realistic. In 2012, we changed the pricing and tariff structure. In 2013-2014, we built behavioural models of users of thermal and battery storage that operate within the simulation framework and closely match the behaviour observed in datasets on those users. And in 2014-2015, we introduced statistics on driving behaviour to better understand how people might use electric cars. This year, we are adding peak-demand pricing as a way to manage demand spikes, a key issue faced by most power grid managers.

The Competitive Benchmarking simulation we use for the Power TAC is divided into three parts:

Real-world data from a variety of sources, such as a social media experiment on electric-vehicle recharging preferences, which is integrated into the model.

A simulated competitive retail power market in a medium-sized city, in which consumers and small-scale producers may choose from among a set of alternative electricity providers – autonomous software agents programmed by individual research groups.

A model of a regulated utility that owns and operates the physical facilities of the infrastructure and manages the supply and demand of the distribution network.
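To make the second part more concrete, here is a deliberately simplified sketch of customers choosing among competing broker tariffs. It is not the Power TAC customer model: the `Tariff` fields, the cost formula, and the broker names and prices are all hypothetical, and real customers in the simulation are far richer than the cheapest-offer rule assumed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tariff:
    """A hypothetical retail tariff offered by one broker agent."""
    broker: str
    price_per_kwh: float  # flat energy price (illustrative)
    monthly_fee: float    # fixed monthly charge (illustrative)


def monthly_cost(tariff, usage_kwh):
    """Total monthly bill for a customer with the given usage."""
    return tariff.monthly_fee + tariff.price_per_kwh * usage_kwh


def choose_tariff(tariffs, usage_kwh):
    """Assumption: each customer simply picks the cheapest offer."""
    return min(tariffs, key=lambda t: monthly_cost(t, usage_kwh))


def market_shares(tariffs, customer_usages):
    """Count how many customers each broker wins."""
    counts = {t.broker: 0 for t in tariffs}
    for usage in customer_usages:
        counts[choose_tariff(tariffs, usage).broker] += 1
    return counts


# Two made-up brokers: low fee and high price versus high fee and low price.
tariffs = [
    Tariff("broker_a", price_per_kwh=0.20, monthly_fee=5.0),
    Tariff("broker_b", price_per_kwh=0.15, monthly_fee=20.0),
]
usages = [100, 250, 400, 600]  # monthly kWh per customer (made up)
shares = market_shares(tariffs, usages)
print(shares)
```

With these made-up numbers the two offers break even at 300 kWh a month, so light users end up with one broker and heavy users with the other. Even a toy like this shows why tariff design shapes who serves which customers.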

In its current incarnation, Power TAC models the economics of an electric distribution system but not its actual physical flows. This is because much work has already been done on the physical flows, but very little has been done on alternative market designs and policies. In particular, it models future retail electricity markets, allowing us to experiment with alternative policy scenarios that improve sustainability but are too risky to immediately apply in the real world.

Tournament scenarios typically simulate two months of market dynamics in two hours of real-time activity, pitting intelligent agents programmed with many different kinds of expertise against each other to study the effect of the competitive market on brokers, markets, and customers.
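The time compression implied by those figures can be worked out in a few lines. This is back-of-the-envelope arithmetic only, and it assumes that "two months" means 60 days; the constant and function names are ours, for illustration.

```python
SIM_DAYS = 60    # assumption: "two months" taken as 60 days
REAL_HOURS = 2   # real-time length of a tournament scenario


def compression_ratio(sim_days, real_hours):
    """Simulated hours that pass per hour of real time."""
    return sim_days * 24 / real_hours


def real_seconds_per_sim_hour(sim_days, real_hours):
    """Real seconds available to simulate one hour of market activity."""
    return real_hours * 3600 / (sim_days * 24)


ratio = compression_ratio(SIM_DAYS, REAL_HOURS)
seconds = real_seconds_per_sim_hour(SIM_DAYS, REAL_HOURS)
print(f"compression: {ratio:.0f}x, {seconds:.0f} real seconds per simulated hour")
```

Under that assumption, the simulation runs about 720 times faster than real life, which leaves an agent roughly five seconds to make its decisions for each simulated hour.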

Although we call Power TAC a competition, everybody wins: all the data from championship tournaments are publicly available for analysis. If somebody wins in a way that turns out to be counterproductive, that tells us all that we need to change the rules a little. For instance, two years ago, we found that one of the agents had discovered a way to exploit tariff terms so as to extract rents from customers in a way that shouldn’t have been possible.

One advantage of the CB process is that it makes it easier for researchers to profit from the domain knowledge of other stakeholders. Having access to all that is known about the functioning of a system gives researchers an earlier and better idea of which problems are likely to be the most common and interesting, and gives them a common vocabulary and view of the system’s overall structure. A robust CB platform also makes it easier for researchers to test their theories because they don’t have to take time to build and test a new set of applicable benchmarks.

Now in its seventh year, Power TAC is starting to see more utility industry participation as well as entrants from outside energy altogether: machine learning experts, for example. Indeed, one such team, from Essen, Germany, actually won on its first try.

Best of all, scholars not directly involved in the project have still benefited from it. Our results have been cited in over 150 papers, not including the work of core competition members. (We’ve even met a few people at non-TAC events who tell us, when we tell them we work on the energy market, about a nifty tool they’ve found – which turns out to be Power TAC!)

CB is not for everybody. Building a platform like Power TAC requires real expertise, specialised knowledge, and a lot of co-operation among a large number of different groups. However, given that wicked problems are by definition more than one organisation can handle, CB seems to us better than a lot of the alternatives. As the American cynic H.L. Mencken once said: ‘For every complex problem there is an answer that is clear, simple – and wrong.’ By inspiring multiple questions rather than one question and multiple answers rather than one answer, Competitive Benchmarking offers a more effective way forward.

Competitive Benchmarking: An IS Research Approach to Address Wicked Problems with Big Data and Analytics, written by Wolfgang Ketter, Markus Peters, John Collins, and Alok Gupta, is forthcoming in Management Information Systems Quarterly.


Rotterdam School of Management, Erasmus University (RSM) is one of Europe’s top-ranked business schools. RSM provides ground-breaking research and education furthering excellence in all aspects of management and is based in the international port city of Rotterdam – a vital nexus of business, logistics and trade. RSM’s primary focus is on developing business leaders with international careers who can become a force for positive change by carrying their innovative mindset into a sustainable future. Our first-class range of bachelor, master, MBA, PhD and executive programmes encourages them to become critical, creative, caring and collaborative thinkers and doers.